Glad to see you, fellow architect. I’m pretty sure you’ve zeroed in on going ahead with microservices or have it in your roadmap for 2020. For that, I give you my heartiest congratulations.

You have come a long way, after all.

Reading up on what microservices really are. Understanding how microservices communicate. And finally designing the perfect architecture using event driven microservices!

That takes a lot of effort.

Now that I’m done with linking all my previous blog posts, let’s dive straight into the meat of the article 😜!

Our Journey Has Just Started

Keeping my lame jokes aside, architecting microservices is quite a task. So much so that you might feel the urge to tweet Kelsey Hightower out of the sheer excitement of having avoided the trap of building a distributed monolith.

I’m serious. Go ahead and tweet. I’ll wait!

Architecting your application is just one side of the story. One might argue that the story begins there. What’s ahead is the tricky part of operating it.

Allow me to explain.

The success of your microservices architecture can be measured by how empowered and independent your teams are.

Sure, you’ll have some parental oversight. But if things go well, you’ll quickly find your teams building new microservices on top of the platform you create.

And before you know it, you’ll find yourself in the middle of a microservices boom.

That’s a good thing. You want that, don’t you?

But let’s say that, after reading your tweet, Kelsey asks how you track your microservice dependency tree. What do you do now? You’ve got to answer after that bold tweet of yours.

Well, if you are as deep into documentation as I am, you’ll pull out your beautiful chart and proudly show how things are intended to work.

Strangely, Kelsey doesn’t seem impressed. He fires back with a follow-up question: “How do you make sure that the dependency tree remains that way? What controls do you have in place?”

I bet you didn’t see that coming!

Don’t get disheartened by his excellent point. He’s Kelsey Hightower after all!

But seriously, what are the controls we have in place to make sure things don’t turn into the wild west?
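To make Kelsey's question concrete, here is a minimal sketch of one possible control: comparing the calls you actually observe against the chart you drew. The service names, the `INTENDED` graph and the `observed` list are all made up for illustration; in practice the observed calls would have to come from real traffic data.

```python
# Intended dependency graph: caller -> set of services it may call.
# Every service name here is hypothetical.
INTENDED = {
    "frontend": {"orders", "catalog"},
    "orders": {"billing", "catalog"},
    "billing": set(),
    "catalog": set(),
}


def find_violations(intended, observed_calls):
    """Return the observed (caller, callee) pairs the intended graph does not allow."""
    violations = set()
    for caller, callee in observed_calls:
        # Unknown callers are treated as having no allowed dependencies.
        if callee not in intended.get(caller, set()):
            violations.add((caller, callee))
    return sorted(violations)


# Calls as they might show up in traffic logs (invented sample data).
observed = [
    ("frontend", "orders"),
    ("orders", "billing"),
    ("frontend", "billing"),  # not on the chart
    ("catalog", "orders"),    # not on the chart
]

if __name__ == "__main__":
    for caller, callee in find_violations(INTENDED, observed):
        print(f"unexpected call: {caller} -> {callee}")
```

A check like this only tells you that the chart and reality have drifted apart. It does nothing to stop the unexpected call from happening, which is exactly the gap Kelsey is pointing at.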

This is just one of the many questions we need to answer!

What Comes After Designing an Architecture?

Let’s go through some of the aspects really quick. We need to figure out:

- Controls to prevent services from talking to the sensitive parts of our application - (Authentication & Authorization)

- How to set up routing rules to test out different versions of our microservices - (Traffic Splitting)

- A method to observe the traffic to make proactive decisions before things fail - (Observability)

There are more things like testing and deploying microservices. But for now, let’s try and answer the points I mentioned above.

And no!!! You cannot just use an API Gateway and call it a day. That won’t work. I want you to tell me why that is true. Waiting for your comments!

1) Service authentication & authorization

Let’s say you have a service which manages the billing details of your customers. It could probably be storing sensitive stuff like credit card details and other dangerous information.

Okay, okay. I know that information isn’t dangerous. But a bootstrapped startup like mine can’t help but freak out after checking out the fines that laws like GDPR come with.

Coming back to our billing microservice. Would you want anyone in your company to invoke APIs of such a sensitive service?

I know what you are thinking here: “I’ll code the authorization piece directly into my microservices!” To that I say,

While security is everyone’s responsibility, the weight of implementing security shouldn’t rest on developers.

Think about it. Is it a good idea to task developers with something like this? What if you want to change things tomorrow? You can’t sit and keep refactoring your code.

Implementing service authentication and authorization should be the platform’s responsibility. Your platform should have the knobs to configure communication policies.

The one who really understands and is in charge of security can use these knobs to secure our microservices.
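As a rough illustration of what such a knob could look like, here is a tiny deny-by-default allow list kept outside the services themselves. The `Policy` class and the service names are hypothetical, and the caller is identified by a plain string purely for brevity; a real platform would need a verified identity for each service instead.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    # Maps a target service to the set of callers allowed to reach it.
    allowed_callers: dict = field(default_factory=dict)

    def allow(self, target, caller):
        """Permit `caller` to invoke `target`."""
        self.allowed_callers.setdefault(target, set()).add(caller)

    def is_allowed(self, caller, target):
        # Deny by default: a target with no entry accepts nobody.
        return caller in self.allowed_callers.get(target, set())


# The security owner edits this, not the billing developers.
policy = Policy()
policy.allow("billing", "orders")

if __name__ == "__main__":
    print(policy.is_allowed("orders", "billing"))    # allowed caller
    print(policy.is_allowed("frontend", "billing"))  # not on the list
```

The point of the sketch is where the rule lives: changing who may call billing means editing the policy, not refactoring and redeploying the billing code.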

2) Traffic Splitting

With so many microservices around, managing networking can get complicated.

I would expect the platform to let me describe the routing rules for each microservice.

And this needs to be flexible. I should be able to cover most of the use cases out there like offloading traffic to public clouds during peak loads, migrating my services from one place to another and A/B testing different versions of my microservices.
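For the A/B testing case, the core of such a rule is just a weighted pick between versions. Below is a minimal sketch, assuming made-up version names and a 90/10 split; `pick_version` is an illustrative helper, not any particular router's API.

```python
import random


def pick_version(weights, rng=random):
    """Pick a version name with probability proportional to its weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


# Send roughly 10% of requests to the new version (hypothetical names).
BILLING_ROUTES = {"billing-v1": 90, "billing-v2": 10}

if __name__ == "__main__":
    rng = random.Random(7)  # seeded only so the demo is repeatable
    sample = [pick_version(BILLING_ROUTES, rng) for _ in range(1000)]
    print("requests sent to v2:", sample.count("billing-v2"))
```

Shifting traffic then becomes a matter of changing two numbers, which is the kind of flexibility the same rule would need for migrations and peak-load offloading too.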

Why stop there? I also want this hypothetical router to retry automatically, take care of circuit breaking and manage client certificates.

Don’t judge me!

I’ve put so much effort into writing this post; I deserve to demand that much!

The point is, we do need an intelligent router which can intercept our traffic to make all this possible.
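To give a feel for what circuit breaking means, here is a minimal sketch of the pattern. The class name, the thresholds and the injectable `clock` are my own choices for illustration; a real router would do this per upstream and with far more care.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # seconds to wait before a trial call
        self.clock = clock                # injectable so the logic can be tested
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit is open, failing fast")
            # Cool-down elapsed: allow one trial call (half-open state).
            # A single failure now re-opens the circuit straight away.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # Any success resets the failure count.
        self.failures = 0
        return result
```

Writing this once is easy. Writing it consistently in every service, in every language your teams use, is the part that makes an intercepting router attractive.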

Sure, we don’t need this on day one. But we will need it when we start scaling. And if I’m not wrong, you chose a microservice architecture to maintain agility at scale, remember?

3) Observability

I just can’t emphasize enough how important this is! Let me try anyway.

No one is perfect!

I know we got excited and bragged to Kelsey that we avoided building a distributed monolith. But we could screw up here and there and end up with a partially distributed monolith.

As with any distributed system, debugging is difficult. What we need is network tracing!

And while we are at it, it would be great if we could get the four “golden signals” of monitoring (latency, traffic, errors, and saturation) as well.

Telemetry does sound like a privilege when starting off. But it quickly becomes the most important thing when things begin to fail.
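Here is a small sketch of how the four golden signals could be computed from one window of request records. The `Request` shape, the choice of p95 for latency, counting 5xx responses as errors, and measuring saturation as in-flight requests over capacity are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Request:
    latency_ms: float
    status: int  # HTTP status code


def golden_signals(requests, window_seconds, in_flight, capacity):
    """Summarise one time window of request records."""
    latencies = sorted(r.latency_ms for r in requests)
    n = len(latencies)
    # Nearest-rank 95th percentile, using integer maths to avoid float rounding.
    rank = (95 * n + 99) // 100
    p95 = latencies[rank - 1] if n else None
    errors = sum(1 for r in requests if r.status >= 500)
    return {
        "latency_p95_ms": p95,
        "traffic_rps": n / window_seconds,
        "error_rate": errors / n if n else 0.0,
        "saturation": in_flight / capacity,
    }


if __name__ == "__main__":
    # Invented sample: 19 fast successes and one slow failure in 10 seconds.
    window = [Request(10 * i, 200) for i in range(1, 20)] + [Request(500, 503)]
    print(golden_signals(window, window_seconds=10, in_flight=40, capacity=100))
```

The maths is the easy bit. The hard bit is collecting those request records uniformly from every service without asking each team to instrument its own code.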

So what should we do?

You already know what’s about to come in next. It’s in the friggin title!!!

The solution can be found in using service meshes.

Due to the way a service mesh works (using a sidecar alongside each deployment), a service mesh can observe and control all traffic that flows through it. To make it clear:

A service mesh gives us the power to solve our networking problems by decoupling the responsibility away to a sidecar proxy.

This makes our codebases simpler and more decoupled than ever before. No more messing around with clunky in-house libraries.

A service mesh has a centralised control plane. This means you can have specialised teams work on things like policies and authorisation independent of code. In my opinion, this strongly resonates with the philosophy of independence that started the microservice movement in the first place.

As a bonus, service meshes drop in mTLS to encrypt all traffic within the mesh.

There are multiple excellent service meshes out there, like Istio, Linkerd and Kuma, to name a few.

I have written a post on comparing some of the service meshes out there to help you decide which one you can go forward with.

Is There An Easier Way?

After digesting so much information, I know what you are thinking!

I’m too lazy to learn yet another piece of technology!

I can understand. I’m like you - a Panda 🐼 - just cuter!

So here’s a big surprise: we have built Space Cloud, which abstracts all the complexities of using a service mesh behind a clean and easy-to-use UI!

I know I’m marketing my stuff here but bear with me for a minute.

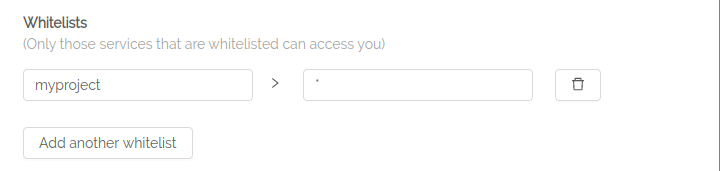

This is how easy it is to whitelist the services which are allowed to talk to a particular service. As easy as filling up a couple of text fields!

In the above example, we are whitelisting all services present in the myproject project.

Space Cloud uses Istio under the hood and takes care of all configuration.

To make things even better, Space Cloud can deploy your microservices, handle all secrets and autoscale your services, including scaling them down to zero!!

And did I mention that everything is Open Source?

Enough of me bragging, check out what Space Cloud is capable of for yourself!

You can also support us in our mission by giving us a star on GitHub.

Hopefully one day I’ll be able to send out a tweet to Kelsey as well!